Description:

This work presents a multimodal sonification system that combines video with sound synthesized from motion capture data. The system allows fast and efficient exploration of musicians’ ancillary gestural data. Sonification complements conventional video by stressing details that could escape attention when they are not displayed in an appropriate representation. The main objective of the project is to provide a research tool for people who are not necessarily familiar with signal processing or computer science. Dedicated mapping strategies let the tool generate meaningful sonifications easily.

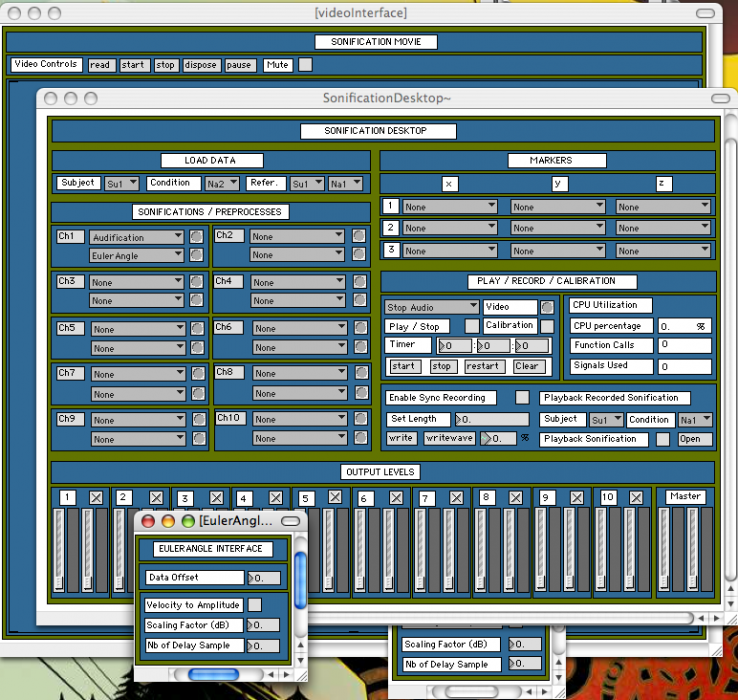

The Sonification Desktop

Motion capture systems such as the Vicon can produce more than 350 signals describing gestures, so reducing the dimensionality of the data is fundamental. Principal Component Analysis is therefore used to objectively reduce the signals to a subset that conveys the most significant gesture information in terms of signal variance. Movement data also vary widely between subjects, so additional control parameters for sound synthesis are offered to restrict the sonification to significant gestures, those easily perceived visually, in terms of speed and path distance. The following figure presents an example of control signals used to drive sound synthesis parameters (left/right knee angles) and their related principal components.

Principal Components
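To illustrate the variance-based reduction described above, here is a minimal Python sketch of Principal Component Analysis computed with a singular value decomposition. It is not the tool's own implementation: the function name `pca_reduce`, the `variance_to_keep` threshold, and the synthetic knee-angle data are assumptions made for illustration only.

```python
import numpy as np


def pca_reduce(signals, variance_to_keep=0.9):
    """Project signals of shape (n_frames, n_signals) onto the fewest
    principal components that explain `variance_to_keep` of the variance.

    Hypothetical helper for illustration; assumes the signals are not
    all constant (total variance must be non-zero).
    """
    centered = signals - signals.mean(axis=0)
    # Rows of vt are the principal directions, ordered by decreasing variance.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    explained = singular_values**2 / np.sum(singular_values**2)
    # Smallest number of components whose cumulative variance reaches the target.
    k = int(np.searchsorted(np.cumsum(explained), variance_to_keep)) + 1
    k = min(k, len(singular_values))
    scores = centered @ vt[:k].T
    return scores, vt[:k], explained[:k]


# Synthetic stand-in for motion capture data: two correlated "knee angle"
# signals (degrees) plus one signal that contains only noise.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 10.0, 1000)
sway = np.sin(2 * np.pi * 0.5 * t)
signals = np.column_stack([
    30 + 10 * sway + rng.normal(0, 0.5, t.size),
    28 + 9 * sway + rng.normal(0, 0.5, t.size),
    rng.normal(0, 0.5, t.size),
])

scores, components, explained = pca_reduce(signals, variance_to_keep=0.9)
print(scores.shape, explained)
```

In this synthetic case the two knee angles move together, so a single principal component carries most of the variance and can stand in for both as a control signal.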

Signal conditioning techniques are then proposed to adapt the control signals to the requirements of the sound synthesis parameters or to emphasize gesture characteristics that the user finds important. All of this data processing is performed in real time within a single environment, which minimizes data manipulation and facilitates efficient sonification design. Real-time processing also lets the system respond instantly to parameter changes and process selection, so that the user can easily and interactively manipulate data and design and adjust sonification strategies.
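As a rough sketch of what such conditioning might look like, the Python example below smooths a control signal, flags the frames where the gesture moves faster than a speed threshold, and rescales the result to a synthesis parameter range. It runs offline on a whole array rather than in real time, and every name and value in it (`condition_control_signal`, the 200 to 800 Hz pitch range, the 5 degrees per second threshold, the 100 Hz frame rate) is a hypothetical choice, not taken from the tool.

```python
import numpy as np


def condition_control_signal(control, out_min, out_max, smooth_frames=5,
                             speed_threshold=0.0, frame_rate=100.0):
    """Smooth a control signal, gate it by speed, and rescale it.

    Hypothetical helper for illustration. Returns the rescaled signal and
    a boolean mask marking frames whose speed reaches `speed_threshold`.
    """
    control = np.asarray(control, dtype=float)

    # Moving average; edge padding keeps the output the same length as the
    # input without pulling the ends toward zero.
    left = smooth_frames // 2
    right = smooth_frames - 1 - left
    padded = np.pad(control, (left, right), mode="edge")
    kernel = np.ones(smooth_frames) / smooth_frames
    smoothed = np.convolve(padded, kernel, mode="valid")

    # Speed in signal units per second, used to keep only significant motion.
    speed = np.abs(np.gradient(smoothed)) * frame_rate
    active = speed >= speed_threshold

    # Linear rescaling of the smoothed signal to [out_min, out_max].
    low, high = smoothed.min(), smoothed.max()
    span = high - low
    if span > 0:
        normalized = (smoothed - low) / span
    else:
        normalized = np.zeros_like(smoothed)
    mapped = out_min + normalized * (out_max - out_min)
    return mapped, active


# Synthetic knee angle in degrees, sampled at an assumed 100 frames per second.
t = np.arange(1000) / 100.0
knee_angle = 30 + 10 * np.sin(2 * np.pi * 0.5 * t)

pitch_hz, active = condition_control_signal(
    knee_angle, out_min=200.0, out_max=800.0,
    speed_threshold=5.0, frame_rate=100.0,
)
print(pitch_hz.min(), pitch_hz.max(), active.mean())
```

A synthesizer could then sound `pitch_hz` only where `active` is true, so that near-stationary frames stay silent.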

IDMIL Participants:

External Participants:

Vincent Verfaille

Oswald Quek

Research Areas:

Funding:

- NSERC

Publications:

- Winters, R. M., Savard, A., Verfaille, V., Wanderley, M. M. (2012). A Sonification Tool for the Analysis of Large Databases of Expressive Gesture. In The International Journal of Multimedia & Its Applications (IJMA) (pp. 13–26). AIRCC Publishing Corporation.

- Winters, R. M., Wanderley, M. M. (2012). New Directions for the Sonification of Expressive Movement in Music Performance. In International Conference on Auditory Display. Atlanta, Georgia, USA.

- Winters, R. M., IV (2013). Exploring Music through Sound: Sonification of Emotion, Gesture, and Corpora. In M.A. Thesis, McGill University (pp. 131). Montreal, Canada.

- Savard, A. (2009). When Gestures are Perceived through Sounds: A Framework for Sonification of Musicians’ Ancillary Gestures. In M.A. Thesis, McGill University (pp. 125). Montreal, Canada.

- Verfaille, V., Quek, O., Wanderley, M. M. (2006). Sonification of Musician’s Ancillary Gestures. In Proceedings of the 2006 International Conference on Auditory Display (ICAD2006) (pp. 194–197). London, England.

Images:

Press:

Video

Example available from Vimeo: https://vimeo.com/32054289

Source Code and Use Examples

Source code and demonstration videos are kept on a public GitHub account: https://github.com/IDMIL/SonificationDesktop