Description:

Sonification of Corpora

Large databases of music (“corpora”) are becoming increasingly abundant. Publicly available research tools (e.g. music21, VIS) are being developed to work with this highly structured data. Some data sets are so large that relevant information is not obvious visually. Patterns are “hidden” in the data, but can be easily recognized through sonification. Sonification can also reveal data features that differentiate composers, genres, or stylistic periods, including features you may not have been aware of. It also provides a very detailed temporal display, preserving detail that may be missed by visual techniques like histograms.

Monteverdi, Bach, and Beethoven at 10,000 notes per second

This project began in a Music Information Retrieval class with Ichiro Fujinaga, where I used music21 to extract pitches from corpora of Monteverdi, Bach, and Beethoven. I then sonified these pitches in SuperCollider using a pitch-based mapping strategy. A profound and immediate result was that the composers could be easily differentiated, even at speeds of 10,000 notes per second. I extended this technique to display data content as well, and tested it in a perceptual experiment. More information on that project can be found on my website, and in Chapter 7 of my Master’s Thesis.
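To make the idea of a pitch-based mapping concrete, here is a minimal Python sketch. It is not the SuperCollider code used in the project. It assumes an equal-tempered mapping from MIDI note number to frequency, a 44.1 kHz sample rate, and a short sine segment per note; the function names (`midi_to_hz`, `sonify`, `extract_midi_pitches`) and the demo note list are hypothetical. The optional helper shows one way pitches might be pulled from the music21 corpus, and requires music21 to be installed.

```python
import math

SAMPLE_RATE = 44100  # samples per second (an assumption, not from the project)


def midi_to_hz(midi_note):
    """Equal-tempered frequency for a MIDI note number (A4 = MIDI 69 = 440 Hz)."""
    return 440.0 * 2.0 ** ((midi_note - 69) / 12.0)


def sonify(midi_notes, notes_per_second=10000, sample_rate=SAMPLE_RATE):
    """Render each note as a short sine segment, keeping the phase continuous."""
    samples = []
    phase = 0.0
    for i, note in enumerate(midi_notes):
        # Sample boundaries for note i; at 10,000 notes per second each note
        # gets only 4 or 5 samples, so the result is heard as a texture.
        start = int(i * sample_rate / notes_per_second)
        end = int((i + 1) * sample_rate / notes_per_second)
        step = 2.0 * math.pi * midi_to_hz(note) / sample_rate
        for _ in range(end - start):
            samples.append(math.sin(phase))
            phase += step
    return samples


def extract_midi_pitches(corpus_path="bach/bwv66.6"):
    """Optional helper: MIDI note numbers from a work in the music21 corpus."""
    from music21 import corpus  # imported lazily; requires music21

    score = corpus.parse(corpus_path)
    return [p.midi for p in score.pitches]


# Placeholder input (not real corpus data): 10,000 notes, i.e. one second of audio.
demo_notes = [60, 64, 67, 72] * 2500
audio = sonify(demo_notes)
```

The resulting list of floats in the range -1 to 1 could be written to a sound file with any audio library. Swapping `demo_notes` for the output of `extract_midi_pitches` would sonify an actual piece.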

Working on the ELVIS Project

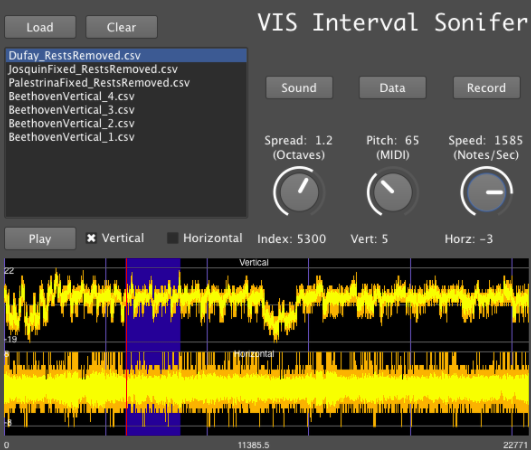

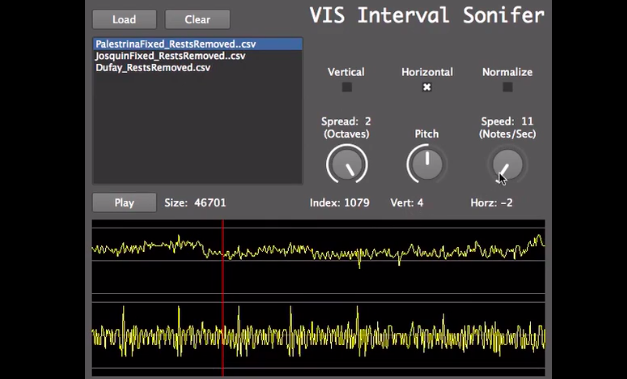

This pitch-based mapping strategy was extended as part of the ELVIS project. A GUI was created that allows the user to interact with data as they might interact with a sound file. You can zoom in, zoom out, scroll, listen, and record at whatever speed your computer can handle. I wrote about this idea on the SIMSSA Blog, and for the code-inclined, the most recent version of the SuperCollider code, application, and examples are on my GitHub page.
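The "data as a sound file" interaction can be pictured as a movable window over the note sequence. The sketch below is an illustration only, not the ELVIS GUI code: the `DataView` class, its fields, and its methods are all hypothetical, and it assumes zooming keeps the window centred and that playback duration is simply window length divided by the playback rate.

```python
from dataclasses import dataclass


@dataclass
class DataView:
    """A hypothetical window onto a sequence of data points (e.g. notes)."""
    total: int   # number of data points in the whole data set
    start: int   # index of the first visible point
    length: int  # number of visible points

    def _clamp(self):
        # Keep the window non-empty and inside the data set.
        self.length = max(1, min(self.length, self.total))
        self.start = max(0, min(self.start, self.total - self.length))

    def zoom(self, factor):
        """factor > 1 zooms in, factor < 1 zooms out; the centre stays fixed."""
        centre = self.start + self.length // 2
        self.length = int(self.length / factor)
        self.start = centre - self.length // 2
        self._clamp()

    def scroll(self, delta):
        """Shift the window by delta points (negative scrolls backwards)."""
        self.start += delta
        self._clamp()

    def duration(self, notes_per_second):
        """Seconds needed to play the visible window at the given rate."""
        return self.length / notes_per_second


view = DataView(total=100000, start=0, length=100000)
view.zoom(2)        # show the middle half of the data
view.scroll(10000)  # move 10,000 notes later
seconds = view.duration(10000)
```

Slicing the note list with `notes[view.start:view.start + view.length]` and passing the slice to a synthesis routine would then play only the visible region.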

Standalone GUI

The completed standalone GUI can be found on the ELVIS Project sonification page.

Press:

- Leenders-Chen, V. “The Big Picture on Big Data.” McGill Headway Magazine, Vol. 8(1), 2014.

Video:

VIS Prototype

Vimeo: https://vimeo.com/79013561